Ten years ago, everything changed.

It was 2011 and I was the CEO of Catholic Charities Fort Worth. I was invited to come to a gathering at Notre Dame to learn more about how “some economists” could help us end poverty. I was intrigued.

The gathering was just a small group: a handful of economists from around the nation and a few of us Catholic Charities CEOs. The point of the gathering was to see if there were ways for nonprofits to work hand-in-hand with economists to understand the ins and outs of what works to eradicate poverty. The economists, all doing well in their careers, shared that the missing piece in their research to understand the impact of poverty interventions was access to real nonprofits doing real work with real people.

“Relentless pursuit” best describes what came next. I became obsessed with figuring out how we could partner with Notre Dame to supply this missing piece. I knew this kind of partnership would be the game changer we needed to address poverty in a real way.

In one of my early calls with Jim Sullivan and Bill Evans (who would go on to found LEO on the ideas generated at that small group gathering), they expressed a concern: was I really sure I wanted to risk learning that what we were doing didn’t work? I didn’t mince words: “Bring it on.”

However, those economists were a skeptical bunch. While they respected my belief in the potential of research and my drive to know what worked and what didn’t, they were clear with me that I was an outlier and that there was no way that my team at Catholic Charities Fort Worth would feel the same. “Heather, social workers don’t want to work with researchers. What program manager would want to hear that their program isn’t successful?” Bill and Jim understood that, idealistically, we wanted to end poverty. But they were afraid that once we started to understand how research results might impact our staff, their jobs, and our programs, we would quickly become uninterested.

So, I invited them to Fort Worth to see for themselves. And my competitive crew at Catholic Charities Fort Worth didn’t disappoint. After a two-day visit, Bill and Jim knew we were serious about ending poverty—with every ounce of our organization’s soul. And for us, that was the moment. That was the moment we switched from doing good to dedicating ourselves to building evidence about what works.

Together, we launched Stay the Course, a program to help low-income individuals persist in and complete community college. After one year of study, we had something new: results! Our dedication to eradicating poverty became rooted in a growing understanding of how to actually do it.

Our economist friends had something new as well. Living in a world of numbers, they began to see the faces and hear the stories of the people in their studies. I will never forget a banquet I attended with Jim and Bill where we had the opportunity to share Stay the Course with some Notre Dame supporters. After watching a video of a client served by the program, Bill turned to me, eyes filled with tears: “Heather, this is the most important thing I have ever professionally done.” I think we all felt that way.

Right here would be a beautiful wrap to our story. But it doesn’t end here. In fact, it takes a sharp turn south.

I was heading back to Notre Dame to present at a conference. As I was leaving, one of my team members stopped me. “Since you’ll be at Notre Dame, can you find out the ETA on our year-two research results? They’re a few months behind and we want to know how our clients are persisting.” I didn’t think twice about it, nodded and agreed to check in, and headed out the door.

That night, Jim and Bill sat me down. “Brace yourself,” they said with concerned looks on their faces. “The results for the treatment group and control group look no different after year two.”

I think they had to say it three times before it sank in. All those fears that they had waved in front of me years before began to consume me. We were finding out that what we were doing wasn’t working. Stay the Course didn’t work. I realized that this kind of crushing news was what Jim and Bill were trying to warn me about early on in our partnership.

I tossed and turned all night.

The treatment group and control group look no different. Toss.

The treatment group and control group look no different. Turn.

The treatment group and control group look no different. Toss.

It was now four a.m., and my mind was racing. Then, I remembered my team member stopping me as I was heading out the door. “Since you’ll be at Notre Dame, can you find out the ETA on our year-two research results? They’re a few months behind and we want to know how our clients are persisting.”

Wait a minute. She asked me how our clients are persisting. Huh? Wouldn’t we know how our clients were persisting if we were providing the intensive case management that was the keystone of the entire program? A light bulb went on. Why would we need to be told how our clients were persisting? If we had the close relationships with our clients that the intervention was designed for, wouldn’t we know if they were still in school?

I returned to Fort Worth and shared the results. I was met with a laundry list of reasons why our clients may not have performed any better than those in the control group: our intervention didn’t work; our hypothesis was wrong; despite case management, our clients still couldn’t make it through.

“We want to know how our clients are persisting” was still ringing loudly in my head. I asked my team to run report after report and a new realization dawned on all of us. Our internal data was able to show us what types of encounters we were having with clients. And what we saw was that we weren’t providing intensive case management. The quality of our intervention had plummeted so much that over 80% of client encounters were being done through email blasts. Email blasts? Unless you’re announcing leftover pizza in the break room, email blasts rarely motivate anyone to do anything. Email blasts couldn’t be further away from intensive case management.

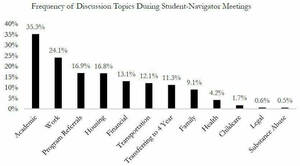

I called Notre Dame to share what we had learned. We all went to work to get our intervention back on track. I met with the donors who had invested in Stay the Course, never so embarrassed, to share the year-two results and the “whys” behind them. We created internal monitoring tools to track the progress of our clients and the integrity of our services, ensuring we actually provided the case management we said we were giving to the treatment group. We put many new metrics in place: weekly personal contact via phone or text between each case manager and client; face-to-face meetings every two weeks; client progress on their action plans every 21 days, so we could understand how our clients were taking steps forward. We “blessed and released” some staff who were not eager to do business the way we needed to, and we retrained others with new expectations around what it looks like to provide case management. We relentlessly worked and monitored our intervention. Phone call, face-to-face meeting, client progress on their action plan. Repeat. When we missed the mark somewhere, we problem-solved. When we were on track, we celebrated.

Year-three research results came, and they exceeded our expectations. Providing the intervention of intensive case management to our treatment group significantly affected their success when compared to the control group. Our clients were two times more likely to stay in school, and female clients were four times more likely to stay in school. Stay the Course worked!

Learning this was not just for learning’s sake. Learning this changed the way we did business at Catholic Charities Fort Worth. Not only did we get right to work to scale up our proven intervention, but we also started developing the right indicators to ensure we were providing our interventions with the utmost integrity in all of our services. This was key to living out our value of stewardship—spending investors’ money and our time in a way that delivered services as they were designed.

We all need to want to know. These answers, the good, the bad, and the ugly, give us the ability to improve and to scale up evidence-based solutions. And those are the only services we should be providing and investing in: those that are proven to really work when it comes to changing everything for people in poverty.